A Glossary of System Failure

Understanding the polycrisis of the 21st century through economic jargon.

The English language is missing a word and we’re all suffering for its absence. It’s easy to dismiss conversations about language and terminology as semantic exercises which get in the way of the ‘real’ issue. But semantics are important and precise language matters because if you can’t neatly describe a problem you don’t have much hope of solving it. For lack of a better term, we’ll refer to this problem as ‘system failure’.

Understanding system failure is an urgent task because the modern world is governed by a web of bureaucratic entities. These organisations might be government departments, public service providers, private businesses, non-profits or some combination of all of the above. But, regardless of their charter, they generally serve some sort of useful social function and they’re generally staffed by conscientious people who aren’t trying to inflict misery on the wider public.

And yet these same organisations often produce egregious outcomes. The old axiom that the whole is greater than the sum of its parts doesn’t seem to hold true for our bureaucracies. Most of the big organisations we have to deal with seem much less capable as institutions than any of the individuals that work for them. Despite all the brainpower and resources at their disposal, bureaucracies fail in ways that are somehow both baffling and predictable.

The consequences of bureaucratic failures vary wildly. Most of the time, irrational bureaucratic policies are more of an annoyance. We’ve all been railroaded by ‘customer service’ departments or sent in circles by a website while trying to cancel a subscription. Anyone who’s ever filled out a loan application or applied for an insurance policy has dealt with the frustration of squeezing their complex personal circumstances into whatever crude template the company uses to evaluate eligibility. These low-level frustrations are well documented by the likes of Douglas Adams (Hitchhiker’s Guide) and Scott Adams (Dilbert).

But large organisations also produce policies and processes that are wildly unethical (if not illegal). During Australia’s 2017 Royal Commission into misconduct in the financial services sector it emerged that many banks and superannuation providers were charging members for financial advice they weren’t actually providing. The banks’ decision to automatically enroll customers in ongoing ‘fee for service’ arrangements allowed them to rake in tens of millions of dollars each year while their reliance on manual record-keeping processes and defective internal systems meant that they had no way of knowing whether any advice was actually being provided. In many cases the customers never had an advisor assigned to them and, in some cases, they were still being charged fees despite having been dead for ten years. For the last century our literary touchstone for this sort of absurdity has remained Franz Kafka.

Similar processes have contributed to the failure of major IT and infrastructure projects. The Australian government’s recent redesign of the Bureau of Meteorology (BoM) website is only the latest boondoggle. That project took six years, cost $96 million and delivered such a poor user experience that some observers warned that it could put lives at risk. Earlier project management failures led to massive cost overruns and technical problems for the Victorian government’s MyKi public transport ticketing system and Queensland Health’s payroll system. These sorts of fiascos are grist for the mill at Working Dog Productions (The Hollow Men, Utopia et al.).

In the worst case scenario organisations commit to decisions that lead directly to death and destruction. In 2017, aircraft manufacturer Boeing began rolling out the latest version of their 737 airliner. Despite some fundamental changes to the design of the aircraft the company decided to treat the 737 MAX as simply the latest iteration of a well-established airframe rather than an entirely new aircraft. This decision meant that pilots wouldn’t require additional certification but it also meant they wouldn’t receive any real training on the new features of the MAX. This decision led to two crashes which claimed the lives of 346 people. To understand these sorts of failures you have to dive into the essays of Kyra Dempsey (AKA Admiral Cloudberg).

The common thread running through all these examples might not seem obvious but, in each case, the people managing those organisations collectively landed on a policy that no reasonable individual would ever recommend. Nobody in the superannuation industry consciously set out to defraud their members and there was no working group in the federal government dedicated to sabotaging the BoM website. Needless to say none of the executives at Boeing would have gambled the reputation of their company on a production deadline if they knew it was going to get hundreds of people killed. Which is not to say that the people running these organisations have the public’s best interests at heart. They’re just not reckless enough to intentionally run those particular risks.

As tempting as it is to vilify those at the top of the org-chart, the reasons for these failures are usually tangled up in a web of internal policies and incentive structures that are very difficult to untangle. For that reason, the people who investigate these failures often find it difficult to assign responsibility to a single person (or even a discrete decision-making body).

Such inquiries almost always yield very unsatisfying 1,000-page reports that criticize the offending organisation for a ‘pervasive culture of complacency’ or point to failures in ‘compliance processes’. Inevitably, these reports will recommend greater investment in ‘risk and governance’ procedures to ensure ‘transparency and accountability’.

Of course, there are good reasons to be skeptical of this narrative. Especially when the people running the investigations turn out to have attended the same schools, universities and clubs as the people running the organizations they’re tasked with regulating. But even the most cynical observer would agree that large organisations produce their own internal dynamics that are hard to discern and hard to wrangle from above.

If we take the verdicts of all those inquiries at face value then it would appear that systemic failures in large organisations share a few key risk factors.

- Unrealistic deadlines or sales targets

- Inflexible processes or policies

- Inaccurate reporting

- Inadequate supervision or regulation

- Lack of communication between internal departments

The problem is that we don’t have a neat term for what happens when all these factors coincide. Lawyers and academics sometimes refer to these incidents as examples of ‘system failure’ but that term has always seemed a little too vague – conflating cause and effect and excusing those who might otherwise bear some responsibility. In order to fit the bill we need a term that’s a little more accusatory. It should describe those situations where a failure of imagination is compounded by a failure of oversight. The resulting mess, then, is not quite an accident and not quite a conspiracy. Instead it’s something much closer to negligence.

And this phenomenon should have its own label because it needs to be distinguished from situations that are superficially similar but much more easily diagnosed. For instance we’re not talking about failures due to flagrant incompetence (Fyre Festival), outright fraud (Theranos) or genuinely unforeseen circumstances (the Ever Given). Neither are we talking about the malicious use of bureaucratic systems like Scott Morrison’s Robodebt scheme which attempted to extort welfare recipients. Rather we’re talking about the sorts of unintentional failures that ought to have been foreseeable but were, for one reason or another, invisible to those in charge.

We might not have a neat label for these sorts of failures but we do have terms that describe their warning signs and their symptoms. In fact there’s a whole discipline, known as ‘systems analysis’, dedicated to understanding how and why large organisations make decisions. This field was an outgrowth of wartime ‘operations research’ and it was codified in the 1950s by the thoroughly sinister RAND Corporation – a military/industrial think tank formed in the aftermath of WWII to coordinate Research ANd Development (hence ‘RAND’) for the U.S. Air Force.

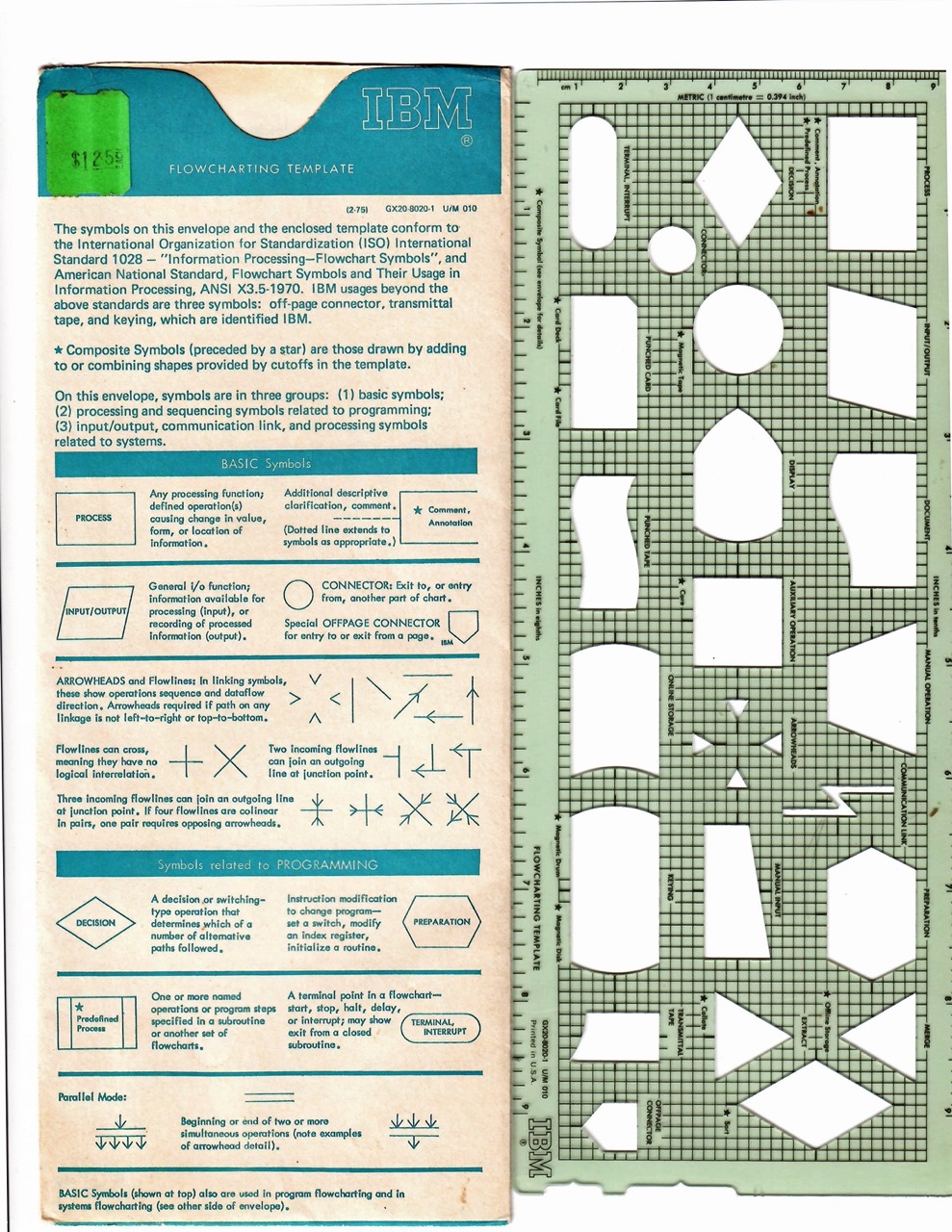

Broadly defined, systems analysis is a method for making decisions that attempts to break a problem down into its constituent parts so as to determine the best course of action. Some of the techniques used by early systems analysts will be very familiar to anyone who has worked in a modern office (if you’ve ever drawn a flow chart, for example, you’ve dabbled in systems analysis). A stricter definition of the term would be nice but even the people who coined the phrase ‘systems analysis’ couldn’t quite decide what it meant. In a presentation given to U.S. Air Force personnel in 1956, RAND analyst Malcolm Hoag acknowledged that:

“A talk on Systems Analysis ought to begin with a definition of the term. Unfortunately no precise, commonly accepted definition exists. For the moment let me say merely that by Systems Analysis we mean systematic examination of a problem of choice in which each step of the analysis is made explicit wherever possible. Consequently we contrast systems Analysis with a manner of reaching decisions that is largely intuitive, perhaps unsystematic, and in which the implicit argument remains hidden in the minds of the decision-maker or his advisor”

While RAND was using systems analysis to calculate the cost effectiveness of nuclear war other think tanks were using it to address more practical concerns. The most optimistic branch of systems analysis was a movement known as cybernetics (from the Greek kybernētēs, meaning “steersman”) – which looked at how complex systems process information and adapt to their environment.

Arguably the most famous proponent of cybernetics was English theorist Stafford Beer who tried to apply cybernetic theories to the management of large organisations. In his bestselling book Brain of the Firm (1972) Beer used biological metaphors to describe the ways in which organisations self-regulate and respond to external shocks. His key theory was a recursive model of organisations known as the Viable System Model which could be applied just as easily to a small team of workers as it could to the department they belonged to, the overall organisation or society as a whole.

Beer’s explanations for how these Viable Systems work often veered off into abstraction but his basic insight was that large organisations are, in a very real way, autonomous. They take on information, they convey this information through various channels and develop their own mechanisms for coping with novel scenarios.

According to the Viable System Model the more people involved in a system the more complicated it becomes. And this increase in complexity is exponential. Thus Beer’s advice to managers was to treat any complex internal process as an unknowable ‘black box’. Rather than trying to peek under the lid to understand what was going on they should instead focus on what goes into the box and what comes out. So, for example, the senior management of a bank shouldn’t concern themselves with the nitty gritty of how a credit score is calculated. Instead they should keep an eye on what general information is collected in order to calculate the score while monitoring the volume of loan approvals to ensure the bank isn’t taking on too much bad debt.
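The bank example can be sketched in a few lines of Python. This is only an illustration of the black-box idea, not anything Beer wrote: the `PeriodReport` fields, the `ALLOWED_FIELDS` list and the 60% approval limit are all hypothetical. The point is that the review looks only at what goes into the box and what comes out, and never at how the score is calculated.

```python
from dataclasses import dataclass


@dataclass
class PeriodReport:
    """What management can see from outside the box for one reporting period."""
    fields_collected: set[str]  # input: what information feeds the credit score
    applications: int           # input: how many loan applications arrived
    approvals: int              # output: how many loans were approved


# Hypothetical policy limits, chosen purely for illustration.
ALLOWED_FIELDS = {"income", "existing_debt", "repayment_history"}
MAX_APPROVAL_RATE = 0.60


def review(report: PeriodReport) -> list[str]:
    """Return warnings based only on inputs and outputs, never on the scoring logic."""
    warnings = []
    unexpected = report.fields_collected - ALLOWED_FIELDS
    if unexpected:
        warnings.append(f"unexpected inputs: {sorted(unexpected)}")
    if report.applications > 0:
        rate = report.approvals / report.applications
        if rate > MAX_APPROVAL_RATE:
            warnings.append(
                f"approval rate {rate:.0%} exceeds limit {MAX_APPROVAL_RATE:.0%}"
            )
    return warnings


quiet = review(PeriodReport({"income", "existing_debt"}, applications=1000, approvals=450))
noisy = review(PeriodReport({"income", "postcode"}, applications=1000, approvals=720))
print(quiet)  # no warnings
print(noisy)  # one warning for the new input, one for the approval rate
```

In this sketch the second report trips both checks: a new field has started feeding the score and approvals have climbed past the limit. Neither warning requires anyone in senior management to understand the scoring formula itself.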

Beer also codified a number of rules and principles for managing large bureaucratic organisations. He pushed for real-time data over canned reports and encouraged managers to run experiments and discard them if they didn’t work. Decades before it became the credo of the ‘agile’ workflow, Beer encouraged managers to ‘fail fast and fail often’.

In the early 1970s Beer was able to put some of his more ambitious management theories to the test when he agreed to help the recently elected President of Chile, Salvador Allende, run his administration. The culmination of his work in Chile was a project called Cybersyn which treated the Chilean economy as one big Viable System which could be monitored from a central control room. This futuristic nerve centre was designed to receive up-to-date information from a network of Telex machines connected to more than 150 of Chile’s state-run enterprises.

Cybersyn never quite got the chance to prove its worth because Allende was killed in a CIA-sponsored coup only a few years into his presidency. As a proof-of-concept Cybersyn was well ahead of its time but, as a solution to Chile’s economic turmoil, it was severely limited by the technology available.

The problem that Beer faced while working on project Cybersyn was how to establish monitoring systems that could collect and process enough data to make informed decisions. Fifty years later we have more data than we know what to do with but our techniques for managing that complexity are still relatively crude (with LLMs representing a step backwards). Ultimately our theories of management and administration have not kept pace with the development of information technologies. There’s still no real consensus on how large organisations should be run and no standard procedures for avoiding decisions that cause misery and havoc.

As British economist (and would-be cyberneticist) Dan Davies pointed out in an interview with the Financial Times – large, sprawling bureaucracies are a relatively recent phenomenon. While the early years of the Industrial Revolution spawned some very complex administrative systems they were always limited by the technology available to them. There’s only so many reams of paper you can put through a typewriter and only so many punch cards you can fit in a filing cabinet.

It took the introduction of electronic computers and magnetic storage for the bureaucratic revolution to really take off. By the end of the 20th century, almost all private companies and public entities in the Western world had switched to using electronic databases for record keeping. Rather than making the task of administering those systems more efficient, digitization simply encouraged administrators to be more ambitious. Nowadays the smallest local councils in Australia are collecting data and processing information on a scale that would have made the mandarins of the Qing dynasty weak at the knees.

As Davies summed up in the FT interview:

“…in the space of less than a human life, we’ve gone from a decision-making model in which we were largely ruled by individuals — and sometimes we didn’t necessarily like those individuals but you knew who they were, you understood where the decisions were coming from — to one in which we’re mainly ruled by algorithms, either literally in the shape of computer programs or metaphorically in the shape of committees, rule books and little middle managers running around who claim that the decisions that they’ve just made wasn’t up to them, but was in fact made many years ago in a committee that you will never know anything about.”

So how do we wrap our heads around the new bureaucratic landscape we’ve found ourselves in? And how do we make sense of system failure if we don’t have the right words to describe what we’re up against? Although we may not have an exact term for the failures mentioned earlier we do have a number of other terms that can help us understand why large organisations make terrible decisions.

‘Moral Hazard’ (circa late 19th century)

One term that helps explain reckless decisions by otherwise conservative organisations is the concept of ‘moral hazard’. This term was picked up by the insurance industry in the late 19th century to describe situations where one party is incentivised to make risky decisions because the costs of those decisions will be borne by another party.

The idea of moral hazard is derived from a very basic assumption – that we make different risk calculations when we have ‘skin in the game’. For example, car owners with comprehensive insurance policies might be more inclined to drive through rising floodwaters in order to get home. Or, at the very least, they might be more inclined to do so than someone who lacks insurance. In order to account for this moral hazard insurance providers put all sorts of exclusions on their liability and insist that customers pay expensive ‘excess’ fees for any claims.

This sense of being insulated from the consequences of your actions may explain reckless behaviour at an institutional level. In the lead up to the 2008 financial crisis investment bankers for some of the United States’ largest financial institutions gambled enormous sums of money on toxic mortgage-backed securities. They did so on the assumption that they worked for organisations that were ‘too big to fail’. This instinct proved to be largely correct: although Lehman Brothers was allowed to collapse, the U.S. government spent billions bailing out the likes of AIG and Citigroup.

As an explanation for system failure, moral hazard is a doubly useful term. On the one hand it helps explain why those working within a large organisation might make rash decisions. If the consequences of cutting corners in one department within an organisation will be borne by those in another department (or by the general public) then the former group have very little incentive to care.

But moral hazard can also be used to justify the neglect of those who need assistance. Carried to its logical extreme it suggests that altruistic actions always carry unintended negative consequences. The typical libertarian gripe goes something like this: ‘why should society offer welfare payments to the unemployed when we know that people are careless with money they didn’t earn?’. Or, in the words of one particularly mean-spirited British politician, “social responsibility is a euphemism for individual irresponsibility.”

As legal scholar Tom Baker wrote in his paper On the Genealogy of Moral Hazard:

By “proving” that helping people has harmful consequences, the economics of moral hazard justify the abandonment of legal rules and social policies that try to help the less fortunate; and, by providing a “scientific” basis for the abandonment of legal rules and social policies, the economics of moral hazard legitimate that abandonment as the result of a search for truth, not an exercise of power.

This framing of moral hazard is the underlying justification for ‘means testing’ – the complicated rules devised to determine eligibility for welfare assistance. And means testing will always be a minefield of system failure because the rules are, by necessity, very simple while our lives are infinitely complicated. In most countries with bureaucratic welfare systems means testing has spawned a small industry of ‘support services’ that exist to help applicants navigate the welfare system.

Viewed from a distance these systems appear to work as advertised. People in need apply for welfare, submit documents, jump through a few administrative hoops and mostly receive what they’re entitled to. Meanwhile, at ground level, the most vulnerable members of society are driven to breaking point by having to play an endless game of chicken with the thresholds for continued government support.

The goalposts are always moving because those administering the welfare system continue to tweak the eligibility requirements over and over again – producing more and more complex rules. These revisions help fulfill popular political objectives (namely, reducing welfare spending through minor forms of austerity) but the side-effect of greater complexity is an increase in non-compliance (due to mistakes, intentional fraud and people simply dropping off the welfare rolls due to fatigue). Which, again, seems like a good result according to the metrics compiled by the government but that’s only because they’re not measuring all the second-order effects of stranding people without welfare payments – misery, drug addiction, broken homes, ‘deaths of despair’ and all the social and economic costs that poverty entails.

‘Groupthink’ (circa 1950)

Another useful concept for understanding why decision makers might be prone to error is the idea of ‘groupthink’. While most explanations for system failure focus on the structure of the organisation (overlapping areas of responsibility, poor communication, rigid policies etc), groupthink attributes system failure to basic psychological impulses. The concept was first laid out in the 1950s by American sociologist William H. Whyte who borrowed a term from Orwell’s 1984 to describe the way in which like-minded people avoid second-guessing each other’s ideas.

Whyte’s idea of groupthink was somewhat counterintuitive. He observed that when groups achieve high levels of cohesion, individuals within that group become much less likely to raise objections to one another’s ideas or offer alternative ideas of their own. Shoving a bunch of experts into a room together may seem like a good way of solving a problem but if those people are more concerned with reaching a consensus – as opposed to solving the problem – then they’re probably going to end up in furious agreement with one another.

Psychologist Irving Janis further popularised the term in his book Victims of Groupthink* (1972) which looked at recent U.S. foreign policy fiascos like the failed invasion of Cuba via the Bay of Pigs in 1961 and the decision to commit ground troops to the war in Vietnam in 1964. According to Janis, blame for these disastrous foreign policy decisions lay in social dynamics that are both commonplace and difficult to counteract. In Victims of Groupthink Janis laid out a whole diagnostic framework for groupthink which he defined as:

“…the mode of thinking that persons engage in when concurrence-seeking becomes so dominant in a cohesive ingroup that it tends to override realistic appraisal of alternative courses of action”

But to Whyte, groupthink was more than just the tendency to find common ground. Instead it was ‘a rationalized conformity – an open, articulate philosophy which holds that group values are not only expedient but right and good as well’. His most prominent contribution to the literature on management theory was a book called The Organization Man (1956) which opens with a description of what we would now call middle management.

“They are the ones of our middle class who have left home, spiritually as well as physically, to take the vows of organization life, and it is they who are the mind and soul of our great self-perpetuating institutions. Only a few are top managers or ever will be. In a system that makes such hazy terminology as “junior executive” psychologically necessary, they are of the staff as much as the line, and most are destined to live poised in a middle area that still awaits a satisfactory euphemism. But they are the dominant members of our society nonetheless. They have not joined together into a recognizable elite—our country does not stand still long enough for that—but it is from their ranks that are coming most of the first and second echelons of our leadership, and it is their values which will set the American temper.”

Whyte was disturbed by the way in which the ‘organization man’ seemed willing to jettison their own opinions in return for a stable career and a house in the suburbs. At the same time he was appalled by the growing obsession with committees and working groups, and by the ‘essential urge’ to reach consensus before settling on a plan. To his mind, the best decision-making processes were those that challenged whatever default assumptions those within the organization were operating under.

For Whyte the tendency towards groupthink could be traced back to the hiring practices of large firms. In particular he railed against the practice of filtering prospective employees through pseudoscientific personality tests which, he argued, mainly served to reward conformity and weed out any exceptional individuals. Instead of promoting conformity Whyte urged corporate leadership to put systems in place that would encourage dissenting opinions.

Over the years a number of methods have been devised to mitigate the effects of groupthink. RAND Corporation pioneered the ‘Delphi technique’ which ensures that participants in a discussion group remain anonymous so as to minimize personal biases. It also incorporated an adjudicator to ensure all relevant ideas were thoroughly interrogated. In setting up his SIGMA think-tank Stafford Beer also tried to ensure a diversity of opinions by inviting all staff to participate in brainstorming sessions – including cleaners and clerical staff. Many of these techniques have become somewhat standard practice and well-moderated brainstorming sessions generally include some way of providing anonymous feedback and someone whose role is to play ‘devil’s advocate’ to all suggestions.

*To be clear – in Janis’ reckoning – the victims of groupthink were the White House decision makers who copped all the criticism for their disastrous policies and not the Cuban counterrevolutionaries and Vietnamese peasants who copped all the bullets and shrapnel.

‘The Purpose of a System Is What It Does’ (circa 1970)

How do you determine the purpose of a given system? It sounds like a simple question. An airport, for example, is a system for transferring passengers on and off aeroplanes. A car dealership is a system for selling vehicles to willing buyers. A welfare system is there to provide a social safety net and the military is there to defend the nation. These all sound like reasonable descriptions of what these institutions are for and yet the purpose of a system can differ substantially depending on where you stand.

For most visitors to Melbourne the city’s airport is a transport hub but for the people who own the airport – Australia Pacific Airports Corporation Limited (APAC) – the task of facilitating travel is secondary to the task of collecting airline fees, leasing retail space in the terminals and extracting a ransom from anyone dumb enough to go into the parking lot. For as long as the airport has existed the Victorian government has had plans to connect the airport to the city via a dedicated railway line but this connection would take a bite out of APAC’s revenue so they have continuously lobbied the government to postpone any extension of the railway network to Tullamarine. Thus, to some extent, the purpose of Melbourne airport is to prevent Melburnians from getting to the airport by train.

The Melbourne Airport example is relatively simple – the people running the airport have a conflict of interest with the public at large. But government departments present a much thornier version of this dilemma. According to Stafford Beer the most conflicted organisations (in terms of their underlying purpose) are those that provide social services. In The Heart of Enterprise (1979) he wrote that:

“Who knows what their nature, boundaries, and purposes are? Oh yes: we have a general idea. But the critical management problems continually defy proper definitions (and therefore any solutions that would be agreed to COUNT as solutions) because the nature of these systems is not at all agreed, their boundaries are extremely fuzzy, and they have as many (conflicting) purposes as there are (conflicting) observers.”

This range of objectives owes as much to historical circumstance as it does to shifting economic theories. Following the Second World War welfare systems in Great Britain and Australia were expanded to manage the massive economic upheavals resulting from demobilization. Over the following decades these systems were kept in place to stave off socialist political movements. More left-leaning governments have tried to use the welfare system to redistribute wealth – taxing rich people and giving the proceeds to the poor. More right-leaning governments have tried to circumscribe welfare payments to discourage ‘welfare dependency’.

According to the Services Australia website the purpose of Centrelink is ‘to support Australians by efficiently delivering high-quality, accessible services and payments on behalf of the government’. But if you apply the right level of cynicism Centrelink looks more like an instrument for disciplining the workforce. It may claim to support those who are unemployed or unable to work but, in its current form, it mainly serves to bury those people under pointless paperwork and demoralise them through a system of ‘mutual obligations’. That being the case, it shouldn’t really be considered a welfare system at all. Rather it should be understood as a system designed to discourage people from applying for welfare in the first place.

Because the exact purpose of an organisation can be slippery, Stafford Beer insisted that the only way to honestly evaluate a large organisation was by looking at what it actually produces. Hence, according to Beer, the Purpose of a System Is What It Does (POSIWID).

According to this formula a system can never really be said to have ‘failed’. Whatever negative side-effects the system produces are, at the end of the day, simply what it does. This means that when a system consistently fails in its stated purpose (while continuing to operate) it must be succeeding at some alternate purpose.

As well as encouraging a certain degree of skepticism regarding the stated aims of an organization, the POSIWID heuristic also suggests that any re-drafting of an organisation’s ‘mission statement’ or ‘company values’ is unlikely to have much effect on what it actually produces.

‘Regulatory Capture’ (circa 1980)

The term regulatory capture is fairly self-explanatory. It refers to a gradual form of corruption whereby an agency set up to regulate a certain industry slowly gets co-opted by the industry itself. The theory of regulatory capture is associated with economist George Stigler whose 1971 paper The Theory of Economic Regulation suggested that regulatory capture is the rule rather than the exception:

“…every industry or occupation that has enough political power to utilize the state will seek to control entry. In addition the regulatory policy will often be so fashioned as to retard the rate of growth for new firms”

Special interest groups don’t just lobby the government to reduce regulatory requirements. They also push for regulations that prevent new entrants into the market. This is a particular problem for Australia which has an economy that is dominated by duopolies (like Coles and Woolworths) and oligopolies (like the ‘Big Four’ banks). These organisations wield an enormous amount of political power and they use that power to lobby for favorable regulations.

During the banking Royal Commission the Australian Securities and Investments Commission (ASIC) was heavily criticized for not pursuing legal action against banks and super funds that had engaged in misconduct. The commission ultimately recommended that ASIC should separate its enforcement department from those that have day-to-day dealings with the banks. So far this recommendation has been ignored.

If inadequate oversight is one of the key risk factors for system failure then regulatory capture is one of the key reasons for inadequate oversight.

‘Bureaucratic Drift’ (circa 1980)

In the 1980s another term emerged to describe how organisations tend to stray from their intended purpose over time. This time it was political scientists that were trying their hand at systems analysis and their targets were meddling public servants. According to their theory of bureaucratic drift, elected officials pass legislation with certain objectives in mind but their intentions end up thwarted by the unelected bureaucrats tasked with implementing that legislation. They attributed this tendency to a combination of personal ideology, corruption, careerism and other human foibles. Given enough time, they argued, bureaucratic organisations will begin to reflect the preferences of the people running them.

For Stafford Beer the danger wasn’t so much that bureaucrats had ulterior motives. It was simply that staff in large organizations invariably found ways to entrench their positions at the expense of the organization’s goals. One way they did this was simply by employing more people to do the same amount of work.

English satirist C. Northcote Parkinson* devoted an entire chapter of his book on bureaucratic absurdities to the proliferation of administrators. His calculations were based on staff numbers in the British Navy and the Colonial Office. Reviewing government employment records, Parkinson observed that between 1914 and 1928 the British Navy had shed 40 of its capital ships even as it gained an extra 1,569 admiralty officials – an increase of 78%. Likewise he noted that the number of Britain’s colonial officials had swelled from 372 in 1935 to a whopping 1,661 by 1954 while the amount of territory actually governed by Britain had fallen by about 60% over that same period.

Based on these two examples, Parkinson’s back-of-the-napkin calculations suggested (only half jokingly) that the number of middle managers within any public administration would increase at a rate of between 5.17% and 6.56% year on year. However, he was careful to avoid making any policy prescriptions on the basis of this trend.

“The discovery of this formula and of the general principles upon which it is based has, of course, no political value. No attempt has been made to inquire whether departments ought to grow in size. Those who hold that this growth is essential to gain full employment are fully entitled to their opinion. Those who doubt the stability of an economy based upon reading each other’s minutes are equally entitled to theirs.”
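
As a rough sanity check, the headcount figures quoted above can be turned into annual growth rates. The sketch below is illustrative only: the function names are hypothetical, and it uses nothing but the endpoint figures cited in this essay (a 78% rise in admiralty officials over 1914–1928, and colonial officials going from 372 in 1935 to 1,661 in 1954). Parkinson’s own 5.17%–6.56% range comes from his formula and may rest on different sub-periods and averaging, so these endpoint rates are not expected to reproduce it.

```python
def simple_annual_rate(total_growth: float, years: int) -> float:
    """Average yearly increase, expressed as a fraction of the starting headcount."""
    return total_growth / years


def compound_annual_rate(total_growth: float, years: int) -> float:
    """Constant year-on-year rate that compounds to the same total growth."""
    return (1 + total_growth) ** (1 / years) - 1


# Endpoint figures as quoted in the essay (not Parkinson's full tables).
admiralty_growth = 0.78                # +78% admiralty officials
admiralty_years = 1928 - 1914          # 14 years

colonial_growth = (1661 - 372) / 372   # relative growth in colonial officials
colonial_years = 1954 - 1935           # 19 years

for label, growth, years in [
    ("Admiralty officials", admiralty_growth, admiralty_years),
    ("Colonial officials", colonial_growth, colonial_years),
]:
    simple = simple_annual_rate(growth, years)
    compound = compound_annual_rate(growth, years)
    print(f"{label}: simple {simple:.2%} per year, compound {compound:.2%} per year")
```

Whichever way the rate is averaged, the point survives: headcount grew steadily while the fleet and the empire it administered were shrinking.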

Reactionary sci-fi writer Jerry Pournelle, who moonlighted as a business consultant in the 1960s and 70s, came up with his own variant on this theme. Pournelle’s Iron Law of Bureaucracy states that:

“In any bureaucratic organization, there will be two kinds of people: First, there will be those who are devoted to the goals of the organization. Examples are dedicated classroom teachers in an educational bureaucracy, many of the engineers and launch technicians and scientists at NASA, even some agricultural scientists and advisors in the former Soviet Union collective farming administration. Secondly, there will be those dedicated to the organization itself. Examples are many of the administrators in the education system, many professors of education, many teachers union officials, much of the NASA headquarters staff, etc. The Iron Law states that in every case the second group will gain and keep control of the organization. It will write the rules, and control promotions within the organization.”

Pournelle’s choice of examples reveals his political bias but the phenomenon he observed applies just as well to corporate entities. In most large organizations a manager’s salary is generally dependent on the number of people that directly report to them. This basic equation creates an incentive to employ more staff even if there isn’t enough productive work for them to do. In his book Bullshit Jobs (2018) David Graeber described this phenomenon as ‘managerial feudalism’ – senior managers surrounding themselves with a retinue of ‘flunkies’ whose only job is to inflate that manager’s status and push them into a higher salary band.

In the ‘West’, financialisation of the economy has further contributed to this organisational bloat. In an address to a hacker conference in 2019 Graeber attempted to explain why the ‘professional managerial class’ seems to be immune from all the downsizing and restructuring that businesses conduct in the name of organisational efficiency.

“Increasingly, profits aren’t coming from either manufacturing or from commerce, but rather from the redistribution of resources and rent: rent extraction. When you have a rent extraction system, it resembles feudalism much more than capitalism as normally described. If you’re taking a large amount of money and redistributing it, you want to soak up as much of that as possible in the course of doing so. And that seems to be the way the economy increasingly works. If you look at anything from Hollywood to the healthcare industry, what you see over the last thirty years is a creation of endless intermediary roles which grab a piece of the pie as it’s being distributed downwards.”

So what do bureaucratic drift and managerial bloat have to do with system failure? Theoretically, having a whole bunch of underutilised staff should provide an organisation with a certain amount of redundancy. You could imagine a situation where a sudden crisis might require an army of reservist administrators. But the reality is that organisations with too many people struggle to respond quickly to changes in circumstance. Not only that, but the diffusion of responsibility for routine work also translates into a diffusion of accountability for routine screw-ups.

*Parkinson is best known for the axiom that ‘Work expands so as to fill the time available for its completion.’

‘Perverse Incentives’ (circa 2000)

In the early 2000s German economist Horst Siebert popularized the idea of perverse incentives – reward systems that produce unintended consequences. The example he gave was a (somewhat dubious) story of a bounty placed on venomous cobras by the British authorities in India during the 19th century. According to the story, the bounty system was a misguided effort to increase public safety but its main effect was to sponsor a nationwide cobra breeding industry.

This particular story might have been too good to be true but the underlying concept of perverse incentives is still useful for understanding how organisations drift off course. All organisations have metrics that they use to evaluate their progress towards some future goal. Often these metrics are financial (quarterly profits or company share price) but they can also be ranking systems (like the NAPLAN test results used to assess school performance in Australia) or polls of public sentiment (many businesses use a ‘Net Promoter Score’ to gauge customer satisfaction).

Initially, metrics and KPIs help management track how their organisation is performing but, over time, they tend to go from being a proxy for the organization’s ultimate goal to being a goal unto themselves. The tendency was observed by economist Charles Goodhart who warned that when a measure becomes a target, it ceases to be a good measure.

The consequences of perverse incentive schemes are often hard to quantify because those consequences are, by definition, not the ones being measured. Senior managers might loudly advertise certain performance targets or enforce strict quotas to motivate operational staff but they must know, on some level, that blindly focusing on those targets increases the risk that staff will cut corners or engage in outright fraud.

One of the few television shows to really dwell on this phenomenon was David Simon and Ed Burns’ HBO series The Wire, which looked at various types of institutional dysfunction in the U.S. city of Baltimore. Season three, in particular, centered on the overwhelming pressure to reduce the crime rate as measured by the city’s CompStat* management system and how that pressure encouraged police officers to manipulate their reports. Season four showed how similar targets hamstrung the teachers working within Baltimore’s public education system. This tendency was brought home in a brief exchange between former police officer turned middle school teacher Roland Pryzbylewski and his colleague Grace Sampson.

Pryzbylewski: I don’t get it. All this so we score higher on the state tests? If we’re teaching the kids the test questions, what is it assessing in them?

Sampson: Nothing. It assesses us. The test scores go up, they can say the schools are improving. The scores stay down, they can’t.

Pryzbylewski: Juking the stats.

Sampson: Excuse me?

Pryzbylewski: Making robberies into larcenies. Making rapes disappear. You juke the stats, and majors become colonels. I’ve been here before.

Sampson: Wherever you go, there you are.

As the cobra example suggests, perverse incentives are accidental in nature. They represent a failure of imagination on the part of those in charge. But in every instance of system failure the question needs to be asked: how hard was it to imagine those downstream effects? To what extent should we hold leadership accountable for failing to think more than one step ahead?

*CompStat was a real police reporting system and the statistical manipulation that Burns and Simon dramatized in The Wire remains a very real phenomenon. The Crime Machine podcast delves into this.

‘Criminogenic Organisation’ (circa 2000)

Perverse incentives, and policies which offer plausible deniability to those in charge, are the key features of what fraud investigator William K. Black referred to as ‘criminogenic organisations’. Black used this term to describe companies that seemed to be optimized to do the wrong thing. What Black observed was that, in the absence of regulation, senior managers and CEOs had a free hand to restructure their organisation by removing safeguards and establishing incentive structures that encouraged fraud and other forms of criminal behaviour.

According to Black, the 2008 Financial Crisis was due, in large part, to this sort of ‘control fraud’. In the years leading up to the crisis senior executives at various banks and financial firms systematically removed the checks and balances that were supposed to keep their staff honest. These CEOs promoted irresponsible lending in order to hit revenue targets that boosted share prices and netted them lucrative bonuses.

Encouraging this sort of reckless lending without getting into legal strife requires a light touch. While a CEO might not be able to get away with sending out a company-wide email telling their employees to engage in accounting fraud they can effectively send the same message through their bonus plans.

The important thing to note about criminogenic organisations is that they’re not necessarily places that recruit ‘bad people’. Rather, they’re machines for turning good people bad. As economist Dan Davies laid out in The Unaccountability Machine (2024):

“The cognitive dissonance caused by being a good person in a bad system is immense, and one way to reduce it is to stop being such a good person…All the top executives had to do was set unrealistic profitability targets and underinvest in legal departments and compliance systems. It wasn’t so much that anyone had told their traders what to do; more that nobody ever organised things in such a way that they wouldn’t form a criminal conspiracy.”

If there’s a scale of system failure that extends from ‘cock-up’ to ‘conspiracy’ then this sort of control fraud is well on the way to being a conspiracy.

‘Accountability Sink’ (circa 2020)

In The Unaccountability Machine Dan Davies makes an ambitious attempt to explain ‘why big systems make terrible decisions’. His contribution to our glossary of system failure is the concept of an ‘accountability sink’ – a process or policy designed to short-circuit disputes and deflect criticism. According to Davies, accountability sinks are an essential feature of any functioning bureaucracy and they come in all shapes and sizes. At small scale they manifest as company policies that close the door in your face. If you’ve tried to get a refund for a defective product only to be told that refunds don’t apply to items purchased during a sale period then you’ve encountered this sort of accountability sink.

To be effective the sink requires staff within an organisation to follow a pre-defined script while denying customers any avenue of appeal. According to Davies:

“…the crucial thing at work here seems to be the delegation of the decision to a rulebook, removing the human from the process and thereby severing the connection that’s needed in order for the concept of accountability to make sense”

But false avenues of appeal can be another form of accountability sink. If you’ve ever tried to dispute something with Centrelink then you’ll have found yourself shunted between Centrelink’s internal complaints department, its formal review system and its compensation scheme. Each of these departments will refer you, in a circular fashion, to one another until you decide to escalate your complaint to the Commonwealth Ombudsman who will refer you to the Australian Information Commissioner who will suggest that you appeal Centrelink’s decision through the Administrative Review Tribunal. For their part the ART will regretfully explain that their hands are tied by the Act of Parliament that they operate under. They’ll suggest that you take the matter to Federal Court – at which point you’ll give up, having spent more time and effort pursuing the complaint than the complaint was worth in the first place. In concert all these organisations act as one big accountability sink to prevent people from contesting the sorts of routine administrative failures that occur when organisations rely on rigid policies.

There are, of course, good reasons to establish strict policies and rulebooks. Modern states are made up of millions of people with complex needs and competing priorities so we need ways of triaging complaints, collating feedback and making decisions automatically. Take loan applications as an example. As frustrating as it might be to find yourself at the mercy of opaque credit-rating calculations and arbitrary income tests it’s obvious that some sort of system is necessary to determine eligibility. You can’t run a bank by having customer service staff weigh up the merits of each loan applicant according to their personal intuition. Any process that relied on these sorts of subjective assessments would quickly grind to a halt and applicants would inevitably be rejected due to prejudices on the part of the assessor. Setting down rules and making staff stick to those rules is the only way to ensure a measure of fairness.

As Felix Martin wrote in a review of Davies’ book for the Financial Times:

“Seen from another perspective, accountability sinks are entirely reasonable responses to the ever-increasing complexity of modern economies. Standardisation and explicit policies and procedures offer the only feasible route to meritocratic recruitment, consistent service and efficient work. Relying on the personal discretion of middle managers would simply result in a different kind of mess.”

The problem is that when unforeseen circumstances arise, these rigid processes can produce obscene outcomes. By way of example Davies cites an incident that took place in 1999 when 440 pet squirrels turned up at Amsterdam’s Schiphol airport. When it became apparent that the paperwork accompanying the animals was incorrect the staff for Dutch airline KLM followed company policy and fed the squirrels into an industrial poultry shredder. This story inevitably came out in the Dutch press and KLM’s leadership was forced to issue a public apology for their handling of the situation while still insisting that their staff had acted ‘formally correctly’. No one was fired because no one could work out who to blame for establishing the policy in the first place. As Davies writes:

“The property of there being ‘nobody to blame’ is the definition of what constitutes an accountability sink. It’s not clear what KLM should have done when faced with a consignment of 400 squirrels from a breeder who refused to obey the import regulations. The best solution would have been to refuse to load the cargo in Beijing, but the plane had already flown. Tragically, the decision to put them down and then bear the public opprobrium might even have been correct. But making the specific decision to kill the squirrels would have been much less psychologically tolerable for the policy-making managers than simply creating a system which ensured that they would be shredded unless a lowly employee disobeyed a specific order. In some ways, a disaster like the Schiphol squirrel episode can be seen as the policy mechanism providing one of its intended functions – acting like a car’s crumple-zone to shield any individual manager from a disastrous decision”

If you’re anything like me you’ll find the idea that no one is to blame for these failures a little far-fetched. I suspect that what Davies actually means is that, in most of these cases, responsibility was diffused to such an extent that it would be unfair to selectively prosecute any individual.

At the heart of Davies’ thesis is the cybernetic notion that sufficiently large organizations are a sort of artificial intelligence. He argues that, beyond a certain threshold, these systems can no longer be understood by a single person even if that person dedicates their entire life to the task. The best we can hope to do is keep an eye on the inputs and outputs.

“Nobody I’ve talked to really believes that Boeing is an intelligent being in the sense of being conscious and having independent will. But it’s not just a metaphor; it’s a simplifying assumption which is appropriate in many contexts. When Boeing does something – say, delivers an aeroplane like the 737 MAX with a fundamental flaw that causes it to crash in specific common circumstances, it’s usually more sensible to say that it was Boeing that did this than to take either of the alternative approaches – making a list of all the different decisions that went into that decision, or finding some poor middle management body and pinning all the responsibility on them.”

Strangely, it never occurs to Davies that it might be worthwhile to pin the blame on Boeing’s senior management.

Perhaps it’s unfair to blame those in the C-suite for failures resulting from murky processes that pre-date their tenure or from decisions made on the factory floor, but that sort of accountability is supposed to be part of the job. To paraphrase Uncle Ben: with great salary comes great responsibility.

At several points in the book Davies brings up the 2008 global financial crisis only to insist that this disaster was the result of factors well outside the control of any single person or any group of people. Given that Davies was working as an analyst for Credit Suisse at the time I suspect he is trying to convince himself of this fact as much as his audience. Tellingly, the original title of his book was ‘Decisions Nobody Made’.

Davies might consider it unfair to make examples of a few banking executives for an industry-wide failure but the danger of designating scapegoats has to be weighed against the danger of letting everyone involved off the hook. In the absence of real reforms and regulations the least we can do is inject a little anxiety into the upper ranks of the financial sector. Fear of legal consequences might be the only thing that stands between amoral bankers and brokers and another catastrophic financial collapse.

The devil’s advocate might argue that assigning blame for systemic failures to corporate executives or government ministers might lead to some form of paralysis – an unwillingness on the part of those in charge to approve any changes or take any risks. Faced with the possibility of real consequences (be that loss of status or legal penalties) the people in charge might just stop making decisions – leaving us with leaderless organisations that blunder their way from crisis to crisis. Maybe a certain degree of legal immunity is simply necessary in order to run a large organization.

Maybe that’s the case. But we’ll never know unless we try making people accountable for the machines they’re in charge of.

There’s an excerpt from a training manual that IBM published in 1979. It states, in block letters:

“A COMPUTER CAN NEVER BE HELD ACCOUNTABLE.

THEREFORE A COMPUTER MUST NEVER MAKE A MANAGEMENT DECISION”

Almost fifty years down the track this statement is beginning to sound somewhat controversial. Faced with an increasingly complex world, people like Davies have decided it might be necessary to surrender our responsibility for making decisions to some higher intelligence – whether that be a faceless bureaucracy, an AI algorithm or the collective intelligence of the free market.

“In the future, as well as being made by unaccountable black box systems, important decisions are going to be made by actual robots. This isn’t a choice we’re faced with, unfortunately. It’s just a consequence of things getting more complicated, and passing the threshold at which they have to be analysed as a whole. We cannot afford the luxury of explainability; we can’t keep on demanding that an identifiable human being is available to blame when things go wrong.”

For someone so immersed in the radical optimism of cybernetics there’s a real fatalism to The Unaccountability Machine. Ultimately Davies doesn’t see any hope of inserting accountability back into the machinery of state. The policies have been established, the metrics are set, the accountability sinks are in place and none of it is going away. The last line of the book calls to mind Orwell’s dark vision of ‘a boot stamping on a human face – forever’.

“In the future, ‘I blame the system’ is something we will have to get used to saying, and meaning it literally.”

Needless to say this is not a sentiment that Stafford Beer would approve of.

In the 2nd edition of his opus, Brain of the Firm, Beer attempted to distill the lessons he had learned assisting the socialist government of Salvador Allende in Chile. One of those principles was to ensure there was always a ‘name and a face’ behind every government decision. The working class of Chile were accustomed to being sidelined and neglected by government officials but Beer insisted that public servants approach every problem with the attitude of “I will fix it, or I know who can.”

The truth is that we can decide to make better systems. We can decide what metrics we use. We can provide real avenues of appeal. We can regulate things properly. We can build more accountability into our systems and we can insist that the people in charge consider the downstream effects of their decisions.

All these things are easier to achieve than the pessimists would have us believe because the truth is that most people are already on board with the idea that they have social responsibilities.

None of the people in the organisations mentioned above wanted the ‘bad outcome’ to happen. KLM executives don’t get off on slaughtering squirrels. Boeing executives don’t want their planes to plow into the ocean. The people who work in the finance sector don’t want to periodically devastate the economy and the people working for Centrelink don’t want to inflict misery on their fellow citizens.

In 1970, in an address to attendees at a conference on operational research, Stafford Beer ended his speech by making a pronouncement that was both despairing and hopeful.

“The systems we have already started, which we nourish and foster, are grinding society to powder. It might sound macabre to suggest that computers will finish the job of turning this planet into a paradise after human life has been extinguished.

“But that vision is little more macabre than the situation we already have, when we sit in the comfort of affluent homes and cause satellites to transmit to us live pictures of children starving to death and human beings being blown to pieces.

“I have often heard it said by the most elevated people in this land that the capacity to do this kind of work does not exist. That is not true. In so far as the capacity may be inaccessible at the minute, I have the temerity to say to these elevated personages:

“You drove it away: you bring it back.”

Notes:

William H. Whyte (1956) – The Organization Man

C. Northcote Parkinson (1956) – Parkinson’s Law or The Pursuit of Progress

Malcolm W. Hoag (1956) – An Introduction to Systems Analysis

Stafford Beer (1970) – Operational Research as Revelation

Stafford Beer (1972) – Brain of the Firm (2nd Edition)

Irving Janis (1972) – Victims of Groupthink

Stafford Beer (1973) – Designing Freedom

Stafford Beer (1979) – The Heart of Enterprise

McNollgast (1987) – Administrative Procedures As Instruments of Political Control

Tom Baker (1996) – On the Genealogy of Moral Hazard

Horst Siebert (2001) – Der Kobra-Effekt

William K. Black (2015) – Economic Ideology and the Rise of the Firm as a Criminal Enterprise

Daniel Ziffer (2019) – A Wunch of Bankers

David Graeber (2019) – From Managerial Feudalism to the Revolt of the Caring Classes

Oliver Pol, Todd Bridgman (2022) – Recovering William H Whyte as the founder and future of groupthink research

Axel Trujillo (2023) – Project Cybersyn: How a government almost controlled the economy from a control room

Felix Martin (2024) – The Unaccountability Machine — why do big systems make bad decisions?

Dan Davies (2024) – The Unaccountability Machine

Dan Davies & Andrew Hill (2025) – Transcript: Why do companies make terrible decisions?
